GLM-5 Coding Benchmark - Coding performance benchmark

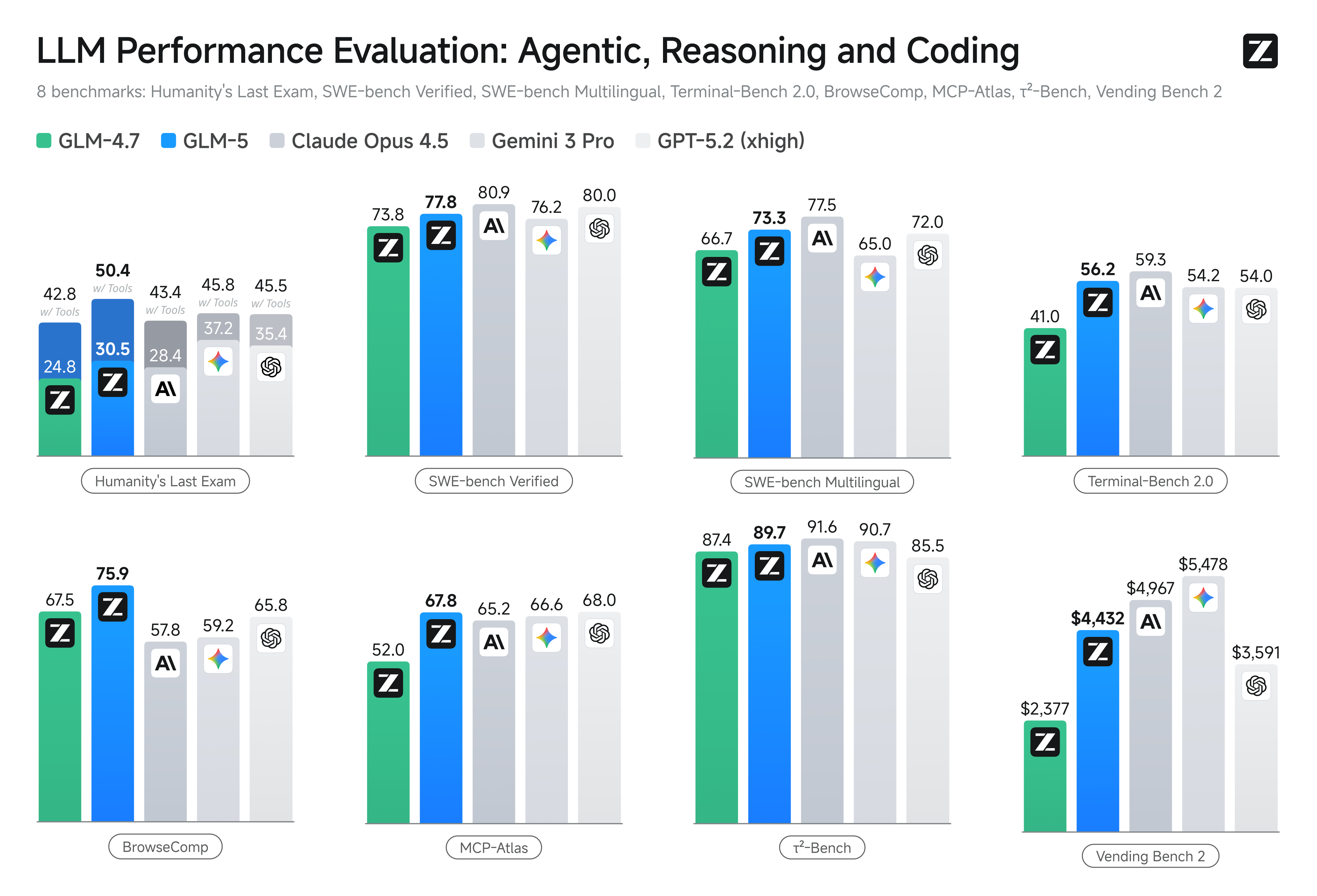

GLM-5 Coding Benchmark - Coding performance benchmark

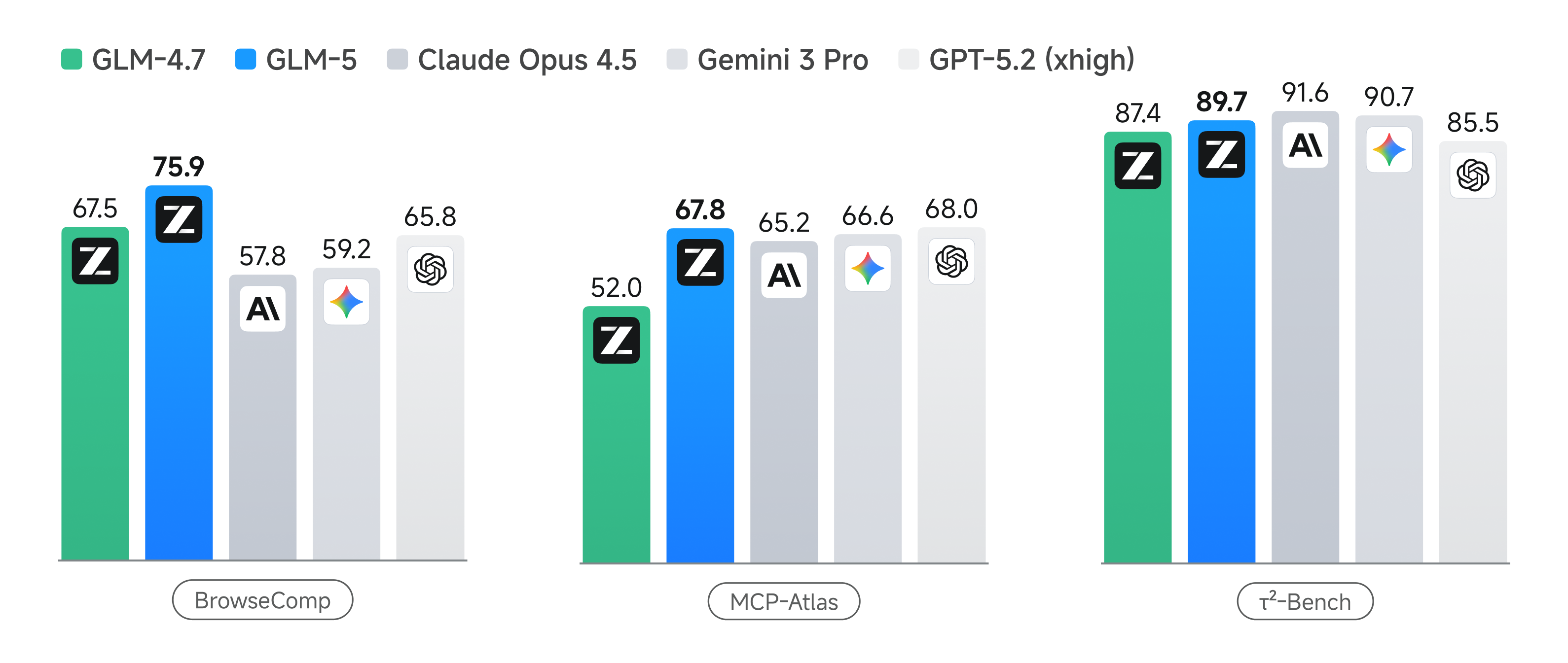

GLM-5 Agent Benchmark - Agent performance benchmark

GLM-5 Agent Benchmark - Agent performance benchmark

GLM-5 Release Review: 745B MoE Architecture and Claude Code Compatibility

GLM-5, Zhipu AI, MoAI-ADK, Claude Code, MoE Architecture, LLM

GLM-5, Zhipu AI's first flagship model of the year, has been released. We analyze its 745B MoE architecture, 202K token context window, and DeepSeek-inspired sparse attention mechanism, and explain how to integrate it with MoAI-ADK 2.2.7.

Overview

GLM-5, Zhipu AI's first flagship model of 2026, was released in February 2026. It is more than an incremental model update: it reflects significant progress in LLM architecture.

This document analyzes the technical features and performance of GLM-5 and explains how to leverage GLM-5 in Claude Code through MoAI-ADK.

1. GLM-5 Technical Architecture

1.1 Core Technical Specifications

The most notable feature of GLM-5 is its 745B-parameter Mixture of Experts (MoE) architecture. A traditional dense model runs every parameter for every token; an MoE model instead uses a router to activate only a few expert subnetworks per token, so per-token compute is far lower than the total parameter count suggests.

[Diagram: MoE Architecture - Mixture of Experts architecture operation]

The key advantages of this architecture are:

- Efficient Inference: Only a subset of the parameters is activated for each token

- Specialized Learning: Individual experts can specialize in particular domains or token patterns

- Scalability: Total capacity can grow by adding experts without a proportional increase in per-token compute
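To make "only a subset of parameters is activated per token" concrete, here is a toy top-k router in plain Python. It is a minimal sketch, not GLM-5's implementation: the four scaling "experts", the router weights, and `top_k=2` are illustrative assumptions, and the post does not say how many experts GLM-5 has or how many are active per token. Gate weights are renormalized with a softmax over the chosen experts only, which is one common gating variant.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def make_expert(scale):
    """Hypothetical toy expert that just scales its input (real experts are feed-forward networks)."""
    return lambda x: [scale * v for v in x]


def moe_forward(token, router_weights, experts, top_k=2):
    """Send one token vector to its top_k experts and mix their outputs by gate weight."""
    # One router logit per expert: dot product of the token with that expert's router row.
    logits = [sum(t * w for t, w in zip(token, row)) for row in router_weights]
    # Keep only the top_k experts; the other experts are never evaluated for this token.
    chosen = sorted(range(len(experts)), key=lambda i: logits[i], reverse=True)[:top_k]
    # Renormalize gate weights over the chosen experts only.
    gates = softmax([logits[i] for i in chosen])
    out = [0.0] * len(token)
    for gate, i in zip(gates, chosen):
        expert_out = experts[i](token)
        out = [o + gate * v for o, v in zip(out, expert_out)]
    return out, chosen


# Usage: 4 toy experts, 2 active per token.
router = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]]
experts = [make_expert(float(i + 1)) for i in range(len(router))]
output, active = moe_forward([2.0, 1.0], router, experts, top_k=2)
print("active experts:", active)  # -> active experts: [0, 1]
print("output:", output)
```

Only experts 0 and 1 run for this token; experts 2 and 3 cost nothing, which is where the inference savings come from.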

1.2 Context Window Expansion

GLM-5 supports a 202K token context window. At the common rule of thumb of roughly 0.75 English words per token, that is about 150,000 words, enough for the following tasks in a single session:

- Analysis of entire large codebases

- Consistent processing of hundreds of pages of technical documentation

- Complex multi-file refactoring
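Before loading a whole codebase into one session, it helps to estimate whether it fits. The sketch below is a back-of-envelope check, not a tokenizer: the 4-characters-per-token and 0.75-words-per-token ratios are rough heuristics (GLM-5's real tokenizer will differ), and `reserve_for_output` is a hypothetical budget kept free for the model's reply. It also reproduces the arithmetic behind the ~150,000-word figure: 202,000 × 0.75 ≈ 151,500.

```python
from pathlib import Path

CONTEXT_LIMIT = 202_000  # GLM-5 context window as stated in this post (tokens)
CHARS_PER_TOKEN = 4      # rough heuristic; actual tokenizers vary by language and content
WORDS_PER_TOKEN = 0.75   # rough heuristic for English prose


def estimate_tokens(text):
    """Rough token estimate from character count, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(texts, reserve_for_output=16_000):
    """Return (estimated_tokens, fits) for a list of file contents.

    reserve_for_output is a hypothetical budget left free for the model's reply.
    """
    total = sum(estimate_tokens(t) for t in texts)
    return total, total + reserve_for_output <= CONTEXT_LIMIT


def read_sources(root, suffixes=(".py", ".go", ".ts", ".md")):
    """Read every file under root whose suffix is listed; undecodable bytes are replaced."""
    return [
        p.read_text(encoding="utf-8", errors="replace")
        for p in Path(root).rglob("*")
        if p.is_file() and p.suffix in suffixes
    ]


approx_words = int(CONTEXT_LIMIT * WORDS_PER_TOKEN)  # 151,500, i.e. roughly 150,000 words

# Usage with in-memory text; pass read_sources("path/to/repo") to measure a real project.
sample = ["def add(a, b):\n    return a + b\n"] * 1000
tokens, ok = fits_in_context(sample)
print(f"~{tokens:,} tokens, fits in one session: {ok}")
```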

1.3 Sparse Attention Mechanism

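GLM-5 uses a sparse attention mechanism inspired by DeepSeek. Standard attention scores every pair of tokens, so its cost grows quadratically with context length; sparse attention has each query attend only to a selected subset of tokens, which makes long-context processing considerably cheaper.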

[Diagram: Sparse Attention Flow - Sparse attention mechanism processing flow]
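The core idea of top-k sparse attention can be sketched for a single query in plain Python. This is a generic illustration under stated assumptions, not GLM-5's or DeepSeek's exact mechanism: the selection step here simply reuses the attention score, whereas DeepSeek's design uses a separate lightweight indexer to pick tokens, which this sketch does not reproduce. With `top_k` equal to the number of keys it reduces to ordinary dense attention.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def dense_attention(q, keys, values):
    """Full attention for one query: every key is scored and mixed."""
    scale = 1.0 / math.sqrt(len(q))
    weights = softmax([dot(q, k) * scale for k in keys])
    dim = len(values[0])
    return [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]


def topk_sparse_attention(q, keys, values, top_k):
    """Attention restricted to the top_k keys with the highest selection scores."""
    scale = 1.0 / math.sqrt(len(q))
    # Selection step. This toy reuses the attention score, so it still touches every key;
    # a real system saves work by making selection much cheaper than full attention.
    scores = [dot(q, k) * scale for k in keys]
    selected = sorted(range(len(keys)), key=lambda i: scores[i], reverse=True)[:top_k]
    # Softmax and weighted sum over the selected keys only.
    weights = softmax([scores[i] for i in selected])
    dim = len(values[0])
    return [sum(w * values[i][d] for w, i in zip(weights, selected)) for d in range(dim)]


q = [1.0, 0.0]
keys = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1], [-1.0, 0.0]]
values = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5], [-1.0, -1.0]]
print("dense:       ", dense_attention(q, keys, values))
print("sparse top-2:", topk_sparse_attention(q, keys, values, top_k=2))
```

In the sparse version, only the two most relevant keys contribute, so the cost of the softmax and weighted sum no longer grows with the full context length.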

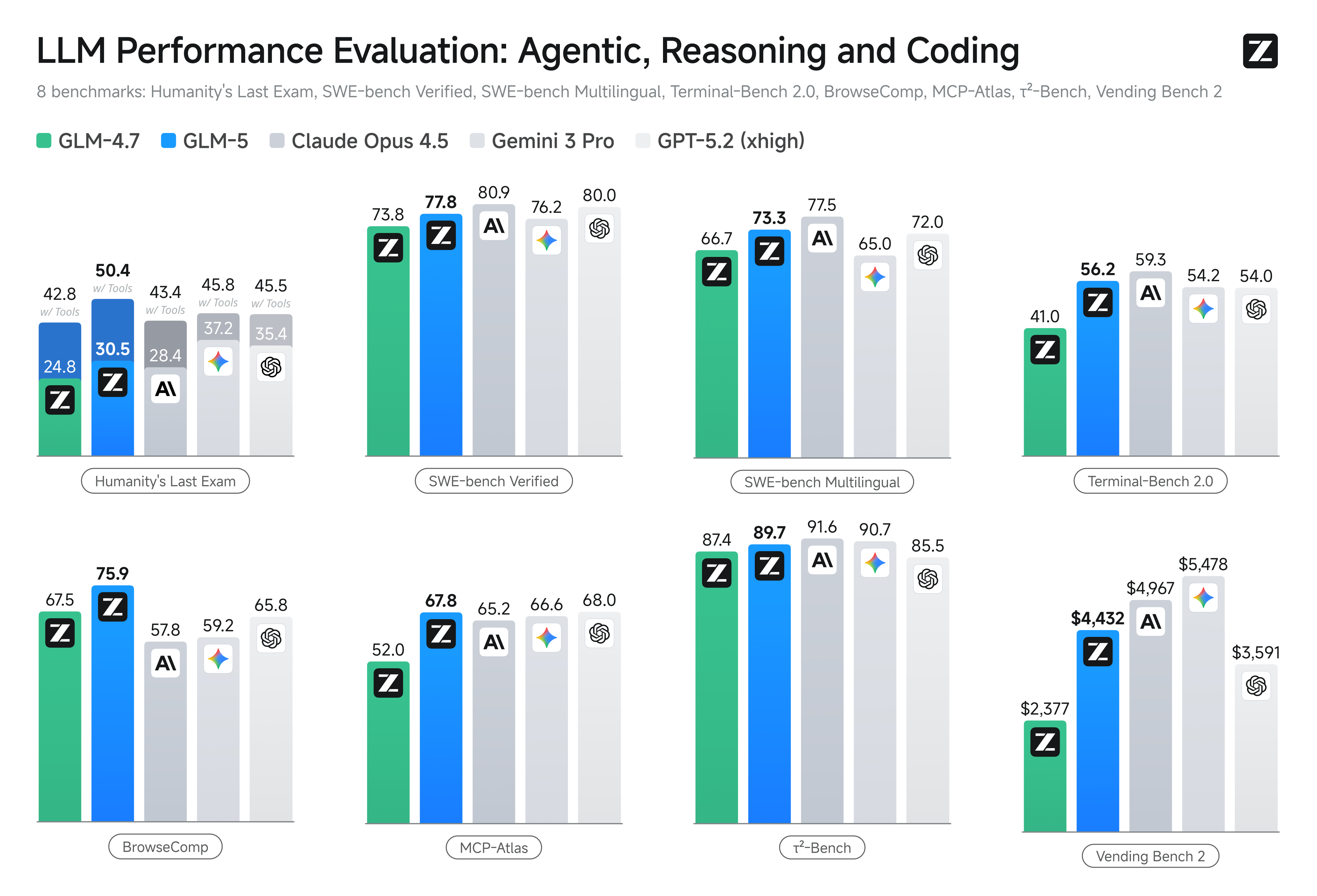

2. Performance Benchmark Analysis

2.1 Coding Performance

GLM-5 delivers state-of-the-art (SOTA) coding performance among open-source models, and on real-world programming tasks it comes close to Claude Opus 4.5.

[Figure: GLM-5 Coding Benchmark - Coding performance benchmark]

The benchmark results show the following characteristics:

- Agent Task Optimization: High accuracy in complex multi-step code generation

- Real-world Scenario Enhancement: Performance optimized for actual development projects

- Language Balance: Consistent performance across various programming languages

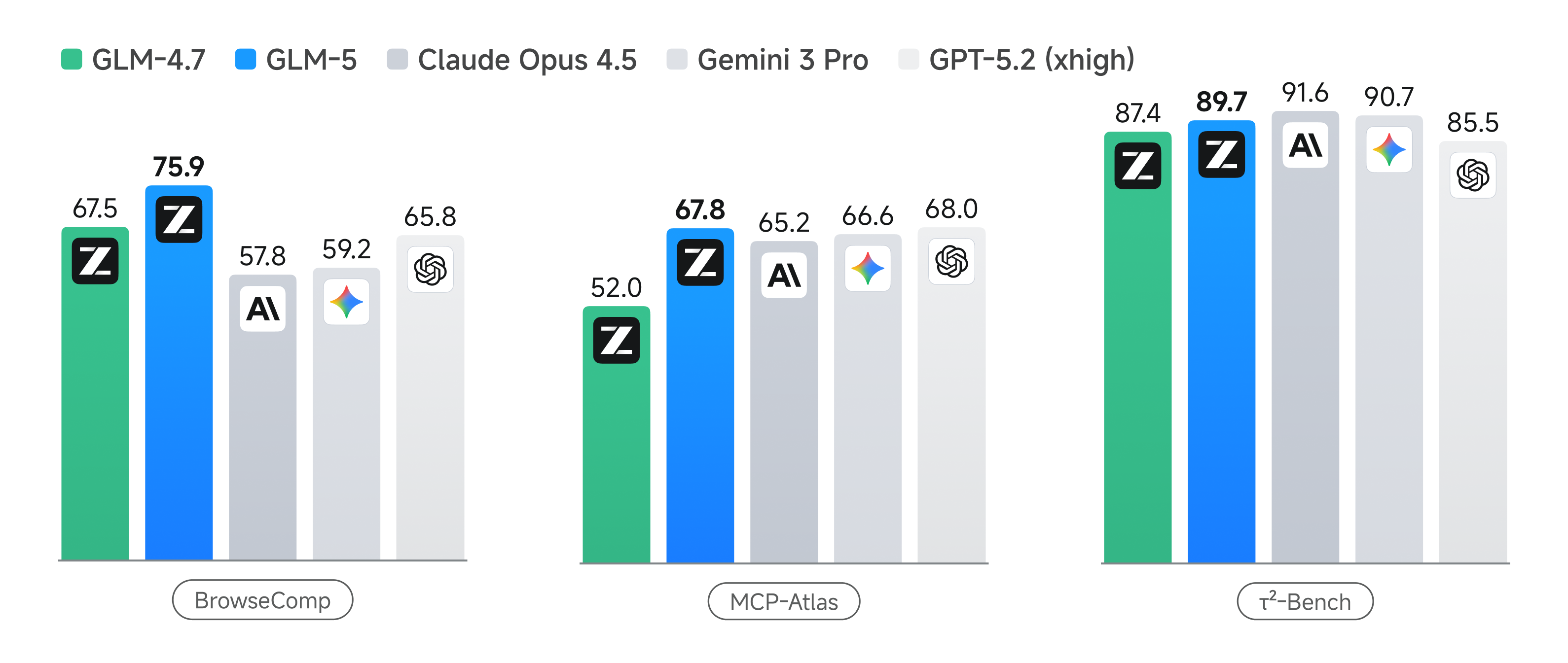

2.2 Agent Performance

GLM-5 is particularly strong on agent-based tasks. The agent benchmarks evaluate tool use, planning, and context retention together.

[Figure: GLM-5 Agent Benchmark - Agent performance benchmark]

3. Claude Code Compatibility

3.1 One-Click Integration

GLM-5 features full Claude Code compatibility. Through Z.AI's DevPack, you can use GLM-5 in Claude Code without code modifications.

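The core of this compatibility is Z.AI's Anthropic-compatible API endpoint. Claude Code speaks the Anthropic Messages API, so pointing its ANTHROPIC_BASE_URL at https://api.z.ai/api/anthropic (as in the settings file in section 4.3) routes its requests to Z.AI's GLM models. Z.AI also provides an OpenAI-compatible endpoint, so tools built on the OpenAI SDK can use GLM-5 as well.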
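Why a base-URL change is enough becomes clearer if you build the kind of request an Anthropic-protocol client sends. The sketch below constructs, but does not send, a Messages API request with only the Python standard library. The `/v1/messages` path, the `anthropic-version` header, and the body shape follow Anthropic's public Messages API; the bearer `Authorization` header mirrors how an `ANTHROPIC_AUTH_TOKEN` is sent. The model name `"glm-5"` is an assumption, and the exact headers Z.AI requires are not documented in this post.

```python
import json
import urllib.request

# Base URL taken from the settings.local.json example in section 4.3 of this post.
ZAI_ANTHROPIC_BASE_URL = "https://api.z.ai/api/anthropic"


def build_messages_request(auth_token, prompt, model="glm-5", max_tokens=1024):
    """Build (but do not send) an Anthropic Messages API request aimed at Z.AI.

    The model name "glm-5" is an assumption for illustration.
    """
    body = {
        "model": model,
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=ZAI_ANTHROPIC_BASE_URL + "/v1/messages",
        data=json.dumps(body).encode("utf-8"),
        method="POST",
        headers={
            # A token configured as ANTHROPIC_AUTH_TOKEN travels as a bearer token.
            "Authorization": f"Bearer {auth_token}",
            "anthropic-version": "2023-06-01",
            "content-type": "application/json",
        },
    )


req = build_messages_request("your-zai-api-key", "Explain MoE architecture")
print(req.get_method(), req.full_url)
# urllib.request.urlopen(req) would actually send it; that step is intentionally omitted.
```

Nothing in the request is GLM-specific except the host, which is why Claude Code needs no code changes to talk to GLM-5.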

3.2 SDK and API Support

GLM-5 can be called from several SDKs.

Official Python SDK (zai-sdk)

```python
from zai import ZaiClient

client = ZaiClient(api_key="your-api-key")

response = client.chat.completions.create(
    model="glm-5",
    messages=[{"role": "user", "content": "Explain MoE architecture"}],
    thinking={"type": "enabled"},  # Enable reasoning mode
)
print(response.choices[0].message.content)
```

OpenAI Python SDK Compatible

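```python
from openai import OpenAI

# Point the standard OpenAI client at Z.AI's OpenAI-compatible endpoint
client = OpenAI(
    base_url="https://api.z.ai/api/paas/v4",
    api_key="your-zai-api-key",
)

response = client.chat.completions.create(
    model="glm-5",
    messages=[...],  # a messages list, as in the previous example
)
```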

Java SDK

```java
ZAIClient client = new ZAIClient("your-api-key");

// `messages` is the chat message list to send (its construction is not shown)
ChatRequest request = ChatRequest.builder()
        .model("glm-5")
        .messages(messages)
        .thinking(true)
        .build();

ChatResponse response = client.chat(request);
```

3.3 Thinking Parameter

GLM-5 supports the `thinking` parameter. When enabled, the model exposes its reasoning process, which is useful for debugging and verification tasks.

| Parameter | Type | Description |
|---|---|---|
| thinking | object | Enables or disables reasoning mode, e.g. `{"type": "enabled"}`; when enabled, the reasoning process is exposed |
| max_tokens | integer | Maximum number of output tokens |
| temperature | float | Controls output randomness |
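The parameters in the table map directly onto a chat-completions request body. The sketch below assembles such a body and separates exposed reasoning from the final answer. It assumes the REST shape `{"type": "enabled" | "disabled"}` for `thinking` and a `reasoning_content` field on the response message when thinking is on; the default `max_tokens` and `temperature` values are illustrative, not documented limits.

```python
import json


def build_chat_payload(prompt, thinking=True, max_tokens=4096, temperature=0.7):
    """Assemble a chat-completions JSON body from the three parameters in the table.

    The thinking object shape and the default values are assumptions for illustration.
    """
    return {
        "model": "glm-5",
        "messages": [{"role": "user", "content": prompt}],
        "thinking": {"type": "enabled" if thinking else "disabled"},
        "max_tokens": max_tokens,
        "temperature": temperature,
    }


def split_reasoning(message):
    """Return (reasoning, answer) from a response message dict.

    Assumes reasoning arrives in a "reasoning_content" field; missing fields become None.
    """
    return message.get("reasoning_content"), message.get("content")


payload = build_chat_payload("Explain MoE architecture")
print(json.dumps(payload, indent=2))

# Hypothetical response message, for illustration only.
reasoning, answer = split_reasoning({"reasoning_content": "Step 1 ...", "content": "MoE routes ..."})
```

Logging `reasoning` separately from `answer` is what makes the thinking mode useful for debugging: you can inspect why the model chose an answer without mixing that text into the output you ship.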

4. MoAI-ADK Integration

4.1 moai glm Command

Starting with MoAI-ADK 2.2.7, GLM-5 is supported. Use the `moai glm` command to switch Claude Code's LLM backend to GLM-5.

```bash
# Initial setup (save API key)
moai glm sk-xxx-your-api-key

# Switch using the saved key
moai glm

# Switch back to Claude
moai cc
```

This command automatically performs the following tasks:

- Saves the API key securely to `~/.moai/.env.glm` (0o600 permissions)

- Injects environment variables into `.claude/settings.local.json`

- Adds the API key file to `.gitignore`
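The three steps above can be sketched in Python to show what each one involves. This is a hypothetical illustration, not MoAI-ADK's code (MoAI-ADK is a Go binary): the `GLM_API_KEY=` line format is assumed from the `${GLM_API_KEY}` placeholder in the settings file, the `.gitignore` entry is an illustrative choice, and the permission check assumes a POSIX file system. The demo runs in a temporary directory so it touches nothing real.

```python
import json
import os
import tempfile
from pathlib import Path


def save_key(env_file: Path, api_key: str) -> None:
    """Write the key to an env file readable only by its owner (mode 0o600)."""
    env_file.parent.mkdir(parents=True, exist_ok=True)
    # os.open applies the mode only when it creates the file, so chmod afterwards too.
    fd = os.open(env_file, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o600)
    with os.fdopen(fd, "w") as f:
        f.write(f"GLM_API_KEY={api_key}\n")  # hypothetical variable name
    os.chmod(env_file, 0o600)


def inject_env(settings_file: Path, env: dict) -> None:
    """Merge variables into the "env" object of a settings file, keeping existing keys."""
    settings = json.loads(settings_file.read_text()) if settings_file.exists() else {}
    settings.setdefault("env", {}).update(env)
    settings_file.parent.mkdir(parents=True, exist_ok=True)
    settings_file.write_text(json.dumps(settings, indent=2) + "\n")


def ensure_gitignored(gitignore: Path, entry: str) -> None:
    """Append entry to .gitignore unless an identical line is already present."""
    text = gitignore.read_text() if gitignore.exists() else ""
    if entry in text.splitlines():
        return
    if text and not text.endswith("\n"):
        text += "\n"
    gitignore.write_text(text + entry + "\n")


with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    key_file = root / ".moai" / ".env.glm"
    settings_file = root / ".claude" / "settings.local.json"
    save_key(key_file, "sk-xxx-your-api-key")
    inject_env(settings_file, {"ANTHROPIC_BASE_URL": "https://api.z.ai/api/anthropic"})
    inject_env(settings_file, {"API_TIMEOUT_MS": "3000000"})  # merges, does not overwrite
    ensure_gitignored(root / ".gitignore", ".claude/settings.local.json")
    ensure_gitignored(root / ".gitignore", ".claude/settings.local.json")  # idempotent
    key_mode = oct(key_file.stat().st_mode & 0o777)
    key_text = key_file.read_text()
    gitignore_lines = (root / ".gitignore").read_text().splitlines()
    settings_env = json.loads(settings_file.read_text())["env"]

print(key_mode, gitignore_lines)
```

Making each step idempotent matters for a command you run repeatedly: running `moai glm` twice should not duplicate `.gitignore` lines or clobber unrelated settings.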

4.2 MoAI-ADK Installation

MoAI-ADK is distributed as a single Go binary. Installation is straightforward.

```bash
# macOS / Linux / WSL
curl -fsSL https://raw.githubusercontent.com/modu-ai/moai-adk/main/install.sh | bash

# Windows PowerShell
irm https://github.com/modu-ai/moai-adk/raw/main/install.ps1 | iex
```

After installation, initialize your project.

```bash
moai init my-project
cd my-project
```

4.3 Activating GLM-5

The procedure to activate GLM-5 in your project is as follows.

[Diagram: GLM-5 Setup Process - GLM-5 configuration process]

Command execution example:

```bash
# Switch with API key
moai glm sk-xxx-your-zai-api-key

# Output
# GLM API key saved to ~/.moai/.env.glm
# Switched to GLM backend.
# Environment variables injected into .claude/settings.local.json
# Run 'moai cc' to switch back to Claude.
```

The generated settings file structure is as follows.

```json
{
  "env": {
    "ANTHROPIC_AUTH_TOKEN": "${GLM_API_KEY}",
    "ANTHROPIC_BASE_URL": "https://api.z.ai/api/anthropic",
    "API_TIMEOUT_MS": "3000000"
  }
}
```
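After running `moai glm` or `moai cc`, a quick check of the settings file confirms which backend Claude Code will use. The sketch below reads the `env` block shown above and also converts `API_TIMEOUT_MS`: 3,000,000 ms is 3,000 s, or 50 minutes. It assumes only the keys shown in this post; what `moai cc` leaves in the file is not documented here, so an unset base URL is simply reported as Anthropic's default.

```python
import json
from pathlib import Path
from urllib.parse import urlparse

ZAI_HOST = "api.z.ai"  # host of the ANTHROPIC_BASE_URL in the settings example above


def load_settings(path=".claude/settings.local.json"):
    """Read a Claude Code settings file, or return {} if it does not exist."""
    p = Path(path)
    return json.loads(p.read_text()) if p.exists() else {}


def active_backend(settings):
    """Name the backend implied by ANTHROPIC_BASE_URL."""
    base_url = settings.get("env", {}).get("ANTHROPIC_BASE_URL", "")
    if not base_url:
        return "Anthropic (default)"
    host = urlparse(base_url).hostname
    return "GLM (Z.AI)" if host == ZAI_HOST else f"custom: {host}"


def timeout_minutes(settings):
    """API_TIMEOUT_MS is stored as a string of milliseconds; convert it to minutes."""
    ms = int(settings.get("env", {}).get("API_TIMEOUT_MS", "0"))
    return ms / 60_000


example = {
    "env": {
        "ANTHROPIC_AUTH_TOKEN": "${GLM_API_KEY}",
        "ANTHROPIC_BASE_URL": "https://api.z.ai/api/anthropic",
        "API_TIMEOUT_MS": "3000000",
    }
}
print(active_backend(example))   # -> GLM (Z.AI)
print(timeout_minutes(example))  # -> 50.0
```

In a real project, call `active_backend(load_settings())` from the project root instead of using the inline example.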

5. Pricing Policy

5.1 GLM Coding Plan

Z.AI offers the GLM Coding Plan in three tiers, two of which cost significantly less than Claude Code Max at $80/month.

| Service | Monthly | Annual | Key Features |
|---|---|---|---|
| GLM Coding Lite | $10 | $120 | Entry-level, ~80 prompts per 5-hour cycle |
| GLM Coding Pro | $30 | $360 | Active developers, ~400 prompts per 5-hour cycle |
| GLM Coding Max | $80 | $960 | Heavy users, ~1,600 prompts per 5-hour cycle, GLM-5 support |
| Claude Code Max | $80 | $960 | Anthropic official, 1M token context |

5.2 Cost Efficiency Analysis

The cost savings from using GLM Coding Plan are as follows:

- 87.5% Cost Reduction: Lite plan saves ~$840 annually vs. Claude Code Max

- 62.5% Cost Reduction: Pro plan saves ~$600 annually vs. Claude Code Max

- Same Price, More Usage: Max plan offers ~1,600 prompts per 5-hour cycle at the same $80

- Comparable Performance: Close to Opus 4.5 in real programming tasks
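The percentages and annual figures above follow directly from the table's monthly prices. The short calculation below reproduces them, taking the $80/month Claude Code Max price used in this comparison as the baseline; annual savings are simply the monthly difference times 12, matching the table's Annual column.

```python
BASELINE_MONTHLY = 80  # Claude Code Max price used in the table above (USD/month)

PLANS = {"GLM Coding Lite": 10, "GLM Coding Pro": 30, "GLM Coding Max": 80}


def savings(monthly, baseline=BASELINE_MONTHLY):
    """Return (percent saved, USD saved per year) relative to the baseline plan."""
    percent = (1 - monthly / baseline) * 100
    annual = (baseline - monthly) * 12
    return percent, annual


for name, price in PLANS.items():
    pct, usd = savings(price)
    print(f"{name}: {pct:.1f}% cheaper, ${usd} saved per year")
# GLM Coding Lite: 87.5% cheaper, $840 saved per year
# GLM Coding Pro: 62.5% cheaper, $600 saved per year
# GLM Coding Max: 0.0% cheaper, $0 saved per year
```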

Conclusion

GLM-5, Zhipu AI's first flagship model of 2026, demonstrates significant technical progress. Its 745B MoE architecture and 202K token context window make it a powerful tool for large-scale development work.

Key Takeaways:

- Architecture Innovation: The 745B MoE design balances inference efficiency and performance

- Claude Code Compatibility: One-click integration via MoAI-ADK eliminates complex configuration

- Cost Efficiency: Plans start at $10/month (Lite), up to 87.5% cheaper than Claude Code Max

- Real-world Performance: SOTA-level performance in coding and agent tasks

Recommended Next Steps:

- Get an API key from the Z.AI subscription page

- Install MoAI-ADK: `curl -fsSL https://raw.githubusercontent.com/modu-ai/moai-adk/main/install.sh | bash`

- Switch to GLM-5: `moai glm sk-xxx-your-api-key`

- Restart Claude Code and start developing

The combination of GLM-5 and MoAI-ADK provides a cost-effective, high-performance AI development environment.