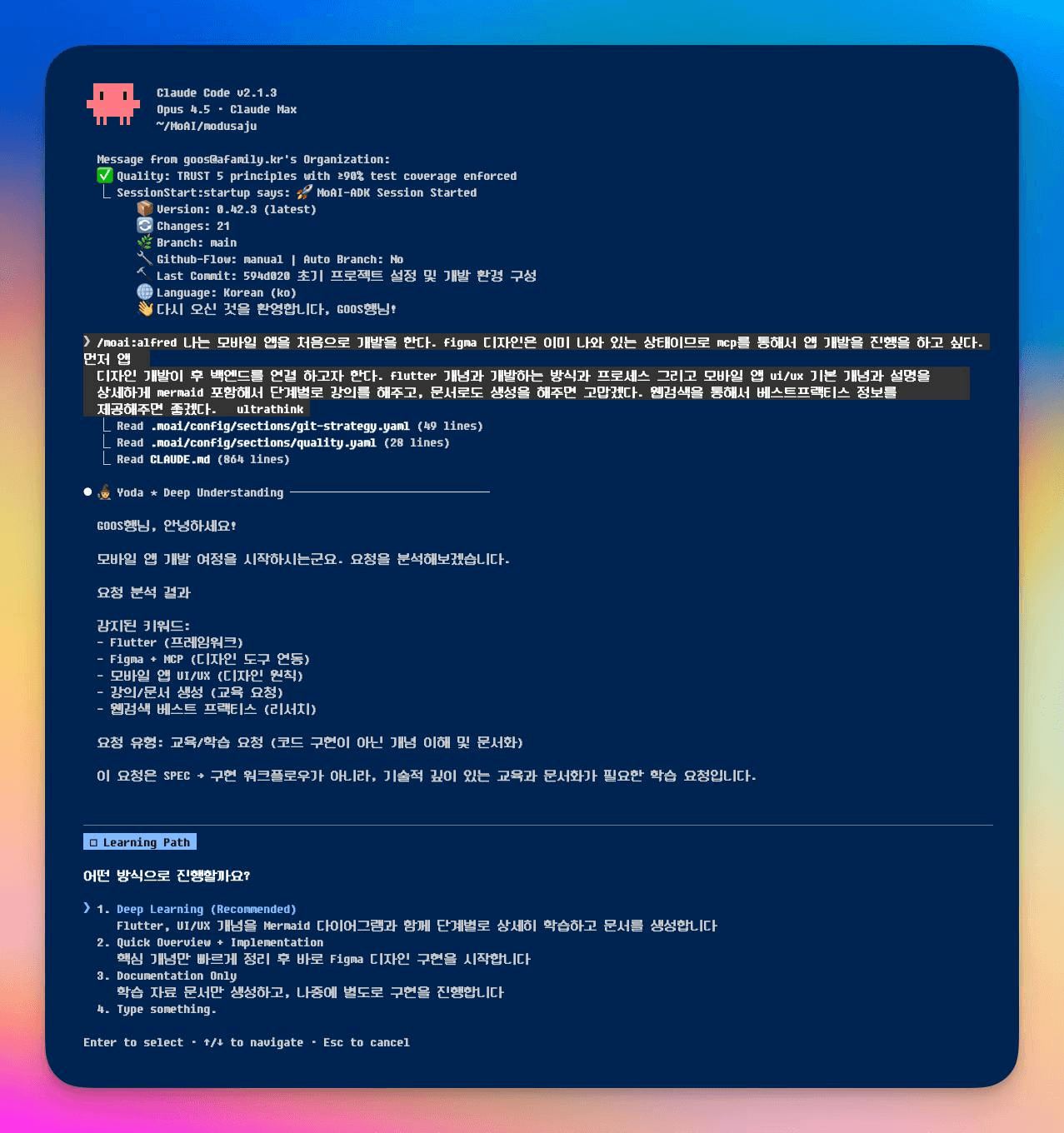

GLM-5 Release Review: 745B MoE Architecture and Claude Code Compatibility

Zhipu AI has released GLM-5, its first flagship model of the year. We analyze its 745B-parameter MoE architecture, 202K-token context window, and DeepSeek Sparse Attention mechanism, then explain how to integrate it with moai-adk 2.2.7.
